Our latest webinar series deals with one of the hottest topics of the moment: Artificial Intelligence (AI).

The first webinar in the All Things AI: The good, the bad and the ugly series focused on the Rise of AI. CEO.digital’s Craig McCartney was joined by AI enthusiasts David Skerrett and Jamshid Alamuti for an introduction to what AI is, where it’s come from and where it’s going. We also opened up the discussion to our audience.

Through a series of polls, we asked attendees to vote on whether various AI use cases were good, bad or just plain ugly. Here’s a quick round-up of everything we discussed on the Rise of AI.

What Is Artificial Intelligence?

For David, Artificial Intelligence is the “incorporation of human intelligence into machines” and is classified into two groups: general and narrow. Narrow AI is task-specific, meaning it is good only at completing one particular activity, such as classifying images.

But general AI is the future, and refers to a machine’s ability to demonstrate intelligent problem solving.

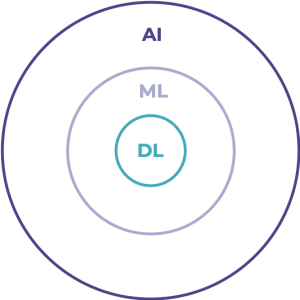

Machine Learning (ML) is a subset/technique of AI, and is classified as “empowering computer systems to learn through data to make predictions”.

Deep Learning (DL) is a subset/technique of ML, inspired by information processing patterns in the human brain involving detection and comparison.

In partnership, these three terms make up what David calls the “scotch egg” of AI.

The Evolution of AI

While AI as a term isn’t new – it was first coined in 1956 – it is only recently that we have developed technology powerful enough to fuel true AI. Thanks to new hardware, datapoints and accessible software solutions, a new age of AI is unfolding. We now even carry complex AIs with us everywhere we go, in our pockets, homes, cars and workplaces. In fact, algorithms now power the experiences and content we digest on a daily basis. That means it is fast becoming a business imperative – not a nice-to-have:

- 83% of businesses say AI is a strategic priority for them today

- 77% of CEOs say AI will increase vulnerability and disruption to the way they do business

- 63% of businesses say pressure to reduce costs will require them to use AI

However, that isn’t to say that AI is easy to implement:

- Just 47% of digitally mature organisations say they have a defined AI strategy

- Only 23% of businesses have incorporated AI into processes and product/service offerings today

Of course, for the larger tech organisations like Facebook, Apple, Netflix and Google, AI is already being deployed and is curating all types of content for users to enjoy. The onus is now on all other businesses to catch up.

David finished the first section of the webinar by pointing out that while AI is getting more powerful and more necessary, it isn’t taking over jobs – it is enhancing them.

The share of jobs requiring AI skills has grown 4.5 times since 2013. Investment, meanwhile, has seen a 6x increase since 2000, and revenues are expected to rise from $1.6bn in 2018 to $31bn in 2025.

How Old Is Artificial Intelligence, Really?

Jamshid was next up, and he was keen to point out that although AI as a term and field is over 60 years old, we shouldn’t think that AI is mature. It’s more “like a baby”.

In the 1950s, pioneering thinkers like John McCarthy, Marvin Minsky and Herbert Simon followed in Alan Turing’s footsteps to lay the foundations for AI. They wanted to create a machine that could imitate the human brain, believing that any imitation of the human brain would automatically mean the machine was intelligent.

But this wasn’t the case. Unfortunately, a machine that is similar in structure to a human brain isn’t necessarily intelligent because it doesn’t have the vast wealth of knowledge to draw upon that a human does.

What that means is we needed to find a way to collect and store a lot of data to feed our AIs. But although we may have given birth to AI as long ago as the 1950s, we’ve only just managed to collect enough data to feed our AIs with the knowledge they need to thrive.

For instance, algorithms that were first proposed in the 1980s and 1990s didn’t have the relevant datasets to function until much later. Even then, once those datasets became available, it took several years to collect enough data to feed the AI properly.

The Q-learning algorithm that powers Google’s DeepMind was first proposed in 1992, but the dataset the algorithm needed didn’t arrive until 2013. The breakthrough for DeepMind didn’t come until two years later.

Similarly, the NegaScout planning algorithm was first proposed in 1983, but the dataset that would power it, and allow IBM’s Deep Blue to defeat chess world champion Garry Kasparov in 1997, didn’t become available until 1991.
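For readers curious about what an algorithm like Q-learning actually does, here is a minimal sketch of its simplest (tabular) form. It is an illustration only, not DeepMind’s system: the five-state corridor, the reward of 1 at the far end, and the values of `ALPHA`, `GAMMA` and `EPSILON` are all assumptions made up for this example. The agent tries actions, observes rewards, and gradually updates its estimate of how valuable each action is in each state.

```python
import random

# Hypothetical toy environment (an assumption for illustration):
# a corridor of 5 states, start at state 0, reward of 1 for reaching state 4.
N_STATES = 5
ACTIONS = (-1, +1)                      # index 0 = move left, index 1 = move right
ALPHA, GAMMA, EPSILON = 0.5, 0.9, 0.1   # learning rate, discount, exploration rate


def step(state, action):
    """Move along the corridor; reaching the last state pays 1 and ends the episode."""
    nxt = min(max(state + action, 0), N_STATES - 1)
    done = nxt == N_STATES - 1
    return nxt, (1.0 if done else 0.0), done


def train(episodes=500, seed=0):
    """Learn a table of action values by trial and error."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            # Explore at random sometimes (and on ties); otherwise pick the best-known action.
            if rng.random() < EPSILON or q[state][0] == q[state][1]:
                a = rng.randrange(2)
            else:
                a = 0 if q[state][0] > q[state][1] else 1
            nxt, reward, done = step(state, ACTIONS[a])
            # Q-learning update: nudge the estimate towards
            # reward + discounted value of the best action in the next state.
            target = reward + (0.0 if done else GAMMA * max(q[nxt]))
            q[state][a] += ALPHA * (target - q[state][a])
            state = nxt
    return q


q_table = train()
# Preferred action in each non-terminal state after training.
policy = ["left" if left > right else "right" for left, right in q_table[:-1]]
print(policy)
```

Even in this toy setting, the agent only learns by collecting experience, which echoes Jamshid’s point that the algorithm alone is not enough without the data to feed it.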

Educating the Public on AI

For AI champions, the future looks bright. We are now able to collect data faster, so our AIs are improving faster too. But the public is worried about what AI means for them. Jamshid was eager to reiterate David’s point: AI isn’t here to take over people’s jobs; it’s here to take over tasks. But Jamshid says we still have some way to go until AI’s full capabilities are realised. We need to get people onside now.

We must educate the public, which currently fears AI, to show them that AI can be beneficial to society.

But that’s not all. We also need to work out an ethical way of using AI, as some harrowing stories in the press tell us. For instance, some police forces around the world are now using AI to predict crime – and getting it wrong.

Here, African Americans have been mistakenly flagged as potential criminals due to biased data that has warped AI decision-making. We must be careful to eliminate bias like this from the data we use to train AI if we are to truly win the public’s support.

The Good, the Bad and the Ugly

Once the main part of the webinar had ended, we opened up the discussion to our audience. We highlighted a series of AI use cases and asked the audience to rate them as good, bad or ugly. Here are our audience’s judgements on everything from Deep Fakes to AI as a source of global pollution…

ZAO Deep Fakes

The Chinese app ZAO allows users to use AI deep fake technology to superimpose their faces onto celebrities. Users simply upload images of their face and the app makes them the star of their favourite shows. But the app came with worrying and aggressive terms and conditions that sparked a host of privacy concerns.

AI-Empowered Cancer Treatment

AI technology is starting to revolutionise healthcare. Data scientists, statistical and machine learning researchers, and cancer informatics experts are looking to AI as a way to enable precision oncology. AI is making it easier to find the best drug to treat an individual.

Tackling Air Pollution

In China, IBM launched a project called Green Horizon, which uses AI to predict how bad pollution will be in Beijing up to 72 hours in advance. With adaptive machine learning, the system determines the best combination of models and data sources to predict and track pollution. Eventually, the system could drive remedies to resolve unacceptable pollution levels.

AI to Predict Future Crimes of Suspects

Some countries already use data to assess how risky it is to release a suspect, but some researchers are now looking to predict who will commit crimes in the future too. The AI draws on public safety videos and image analysis, DNA analysis, gunshot detection, and crime forecasting. While this use case sounds positive because it promotes safety, it is deeply flawed. The algorithms are twice as likely to incorrectly flag black suspects as future criminals as they are white suspects, and white suspects are incorrectly classified as low-risk more often than black suspects.
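To show how a disparity like “twice as likely to be incorrectly flagged” can be measured, here is a small sketch that compares false positive rates between two groups. The records, the group labels `A` and `B`, and the function name are entirely made up for illustration; they are not data from any real system or study.

```python
from collections import defaultdict

# Hypothetical records, invented for illustration only:
# (group, flagged_as_high_risk, actually_reoffended)
records = [
    ("A", True, False), ("A", True, False), ("A", False, False),
    ("A", False, False), ("A", True, True),
    ("B", True, False), ("B", False, False), ("B", False, False),
    ("B", False, False), ("B", False, True),
]


def false_positive_rates(rows):
    """Per group: share of people who did NOT reoffend but were still flagged."""
    flagged = defaultdict(int)
    total = defaultdict(int)
    for group, flagged_high_risk, reoffended in rows:
        if not reoffended:
            total[group] += 1
            flagged[group] += int(flagged_high_risk)
    return {group: flagged[group] / total[group] for group in total}


rates = false_positive_rates(records)
disparity = rates["A"] / rates["B"]
print(rates, disparity)
```

In this invented data, group A is wrongly flagged at twice the rate of group B even though the same number of people in each group went on to reoffend. A check of this kind is one simple way to audit a model before it is trusted with real decisions.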

Inventing a New Sport

Some AI systems are being used to solve creative problems in the way a human would. In some instances, this has produced surprising results, like the creation of a new sport: Speedgate. A text-generating algorithm analysed 400 existing sports and generated over 1,000 ideas for a new one. Speedgate is part croquet, part rugby, part soccer, and is the world’s first sport imagined by an AI.

AI as a Source of Pollution on a Global Level

AI isn’t just a solution to many problems. In some instances, it is also a problem in itself. Training AI is extremely energy-intensive: training a single large AI model can produce around 284 tonnes of carbon dioxide, roughly five times the lifetime emissions of the average car.